This was imported from LinkedIn

DeepSeek vs. GPT-4: The Future of AI Efficiency

As artificial intelligence continues to revolutionize industries, the conversation around energy efficiency and sustainability in AI development is becoming more important. Two major players in this space—DeepSeek and GPT-4—highlight the trade-offs between raw computational power and energy-conscious innovation.

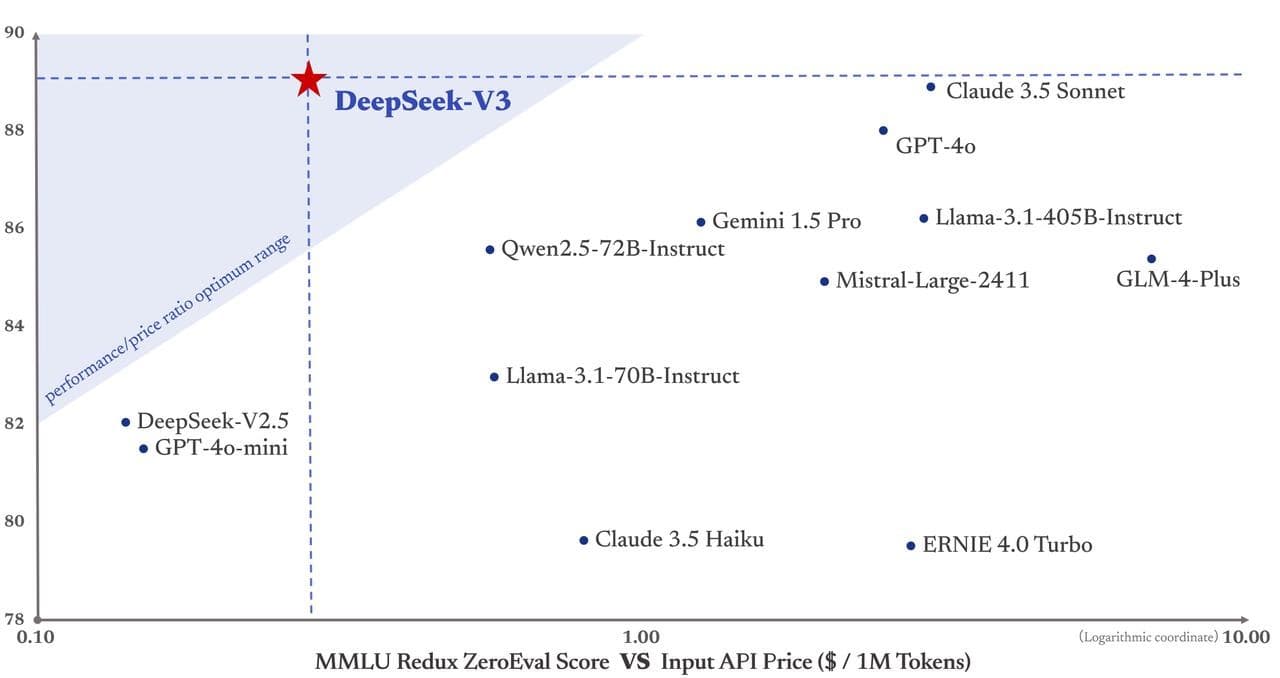

GPT-4, OpenAI's flagship model, is a powerhouse of performance, capable of solving complex problems with remarkable accuracy. However, this comes at a cost. Its massive computational requirements demand significant energy, which drives up operating costs and leaves a substantial carbon footprint. With training costs estimated at USD 63 million to USD 100 million, GPT-4 represents the pinnacle of resource-intensive AI.

Enter DeepSeek, a new contender designed to disrupt this paradigm. By prioritizing algorithmic efficiency and model optimization, DeepSeek reportedly achieves comparable performance while consuming up to 90% less energy and producing 92% fewer emissions. Its leaner approach also translates into far lower reported costs: about USD 5.58 million for the final training run of its V3 model. That figure covers rented GPU time for the run itself and excludes earlier research and experiments.
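
The cost and energy figures above can be sanity-checked with simple arithmetic. The sketch below is a back-of-the-envelope check, not an accounting model. It assumes the inputs published in DeepSeek's V3 technical report: about 2.788 million H800 GPU-hours, priced at an assumed rental rate of USD 2 per GPU-hour. It also takes the 90% energy and 92% emissions reductions at face value. The helper names `training_cost` and `implied_carbon_intensity_ratio` are illustrative only.

```python
# Assumed inputs: DeepSeek-V3 technical report figures (GPU-hours, rental price)
# and the GPT-4 training-cost range quoted in the article. Not measured values.
DEEPSEEK_V3_GPU_HOURS = 2_788_000      # H800 GPU-hours reported for the V3 training run
ASSUMED_PRICE_PER_GPU_HOUR = 2.0       # USD; rental-price assumption stated in the report
GPT4_COST_RANGE_USD = (63e6, 100e6)    # estimated GPT-4 training cost range

def training_cost(gpu_hours: float, price_per_hour: float) -> float:
    """Rental-equivalent cost of a training run: GPU-hours x hourly price."""
    return gpu_hours * price_per_hour

def implied_carbon_intensity_ratio(energy_cut: float, emissions_cut: float) -> float:
    """Emissions = energy x carbon intensity of the electricity, so the implied
    intensity ratio (new / baseline) is (1 - emissions_cut) / (1 - energy_cut)."""
    return (1 - emissions_cut) / (1 - energy_cut)

deepseek_cost = training_cost(DEEPSEEK_V3_GPU_HOURS, ASSUMED_PRICE_PER_GPU_HOUR)
print(f"DeepSeek-V3 run: USD {deepseek_cost / 1e6:.3f} million")
for gpt4_cost in GPT4_COST_RANGE_USD:
    print(f"GPT-4 estimate of USD {gpt4_cost / 1e6:.0f}M is {gpt4_cost / deepseek_cost:.1f}x higher")

# "90% less energy" means one tenth of the baseline energy; "92% fewer emissions"
# means 0.08 of the baseline emissions.
ratio = implied_carbon_intensity_ratio(0.90, 0.92)
print(f"Implied carbon intensity vs. baseline: {ratio:.2f}")
```

Under these assumptions the reported run cost is roughly 11 to 18 times below the GPT-4 estimates. A 92% emissions cut alongside a 90% energy cut would also imply electricity about 20% less carbon-intensive than the baseline. If the two percentages were measured against different baselines, that last inference does not hold.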

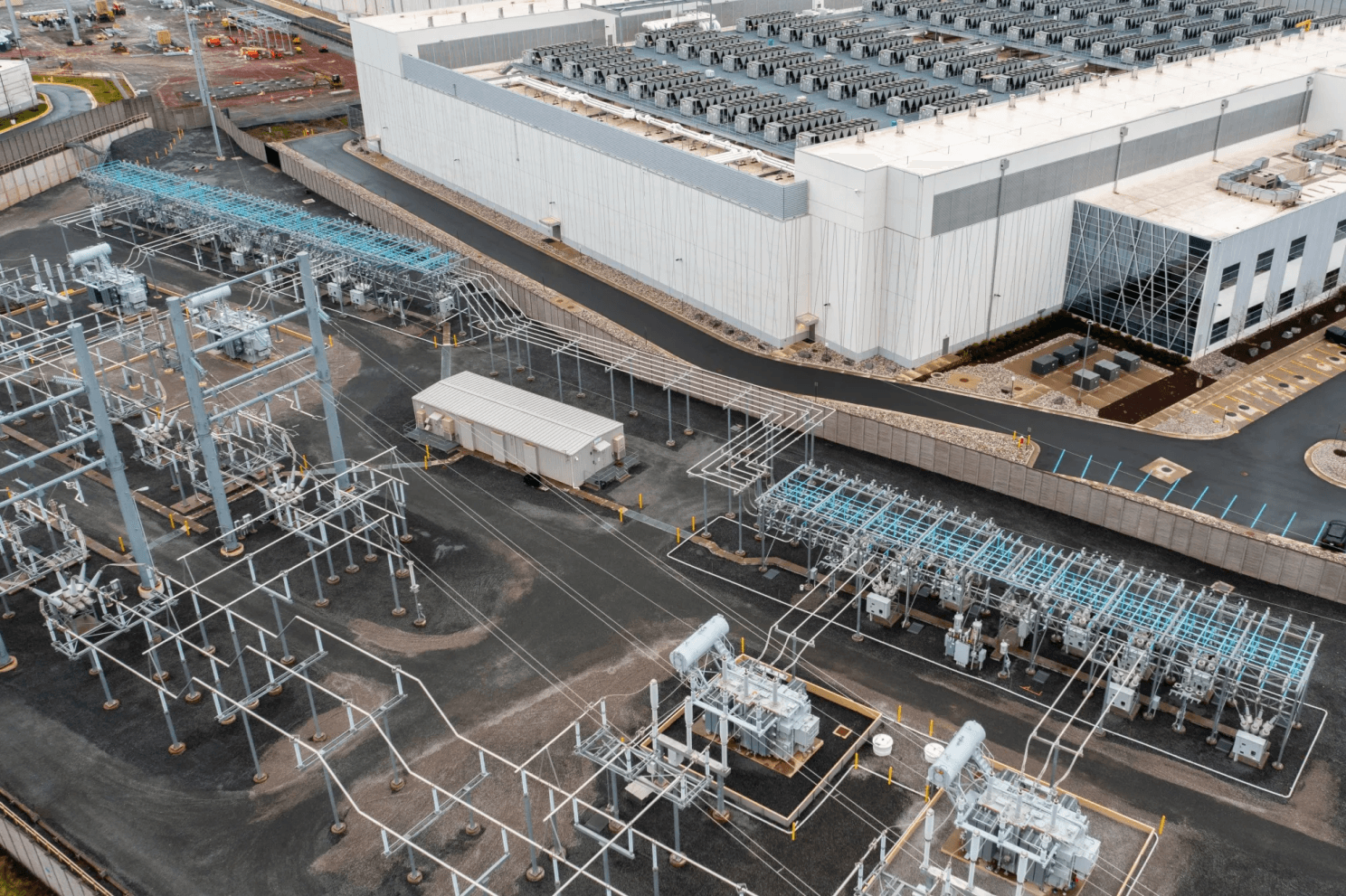

Reduced Grid Demand with DeepSeek

DeepSeek's energy-efficient design has far-reaching implications for power grids. AI data centers currently account for a growing share of global electricity consumption, often straining grids during peak demand periods. By requiring significantly less power to operate, DeepSeek could ease this burden, reducing the need for costly grid expansions or fossil-fuel-powered plants to support AI workloads. Furthermore, its efficiency opens the door for data centers to better participate in demand response programs, dynamically shifting workloads to off-peak hours and helping stabilize the grid during high-demand periods.
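
The demand-response idea can be illustrated with a toy scheduler. This is a minimal sketch, not how any real data center or grid operator works. It assumes a hypothetical 24-hour grid-load forecast and a batch of deferrable AI work measured in whole capacity slots. It greedily places that work into the lowest-load hours, up to a per-hour capacity cap. The names `schedule_flexible_load`, `forecast_mw`, and `capacity_per_hour` are illustrative.

```python
from typing import List

def schedule_flexible_load(forecast_mw: List[float], job_hours: int,
                           capacity_per_hour: int) -> List[int]:
    """Greedy demand-response sketch: assign deferrable work to the hours
    with the lowest forecast grid load.

    forecast_mw: hypothetical forecast grid load (MW) for each hour of the day.
    job_hours: total deferrable work, in units of one capacity slot each.
    capacity_per_hour: maximum units of work the data center can run per hour.
    Returns the units of work scheduled in each hour.
    """
    plan = [0] * len(forecast_mw)
    # Visit hours from least to most loaded (sorted() is stable, so ties keep
    # chronological order).
    for hour in sorted(range(len(forecast_mw)), key=lambda h: forecast_mw[h]):
        if job_hours == 0:
            break
        units = min(capacity_per_hour, job_hours)
        plan[hour] = units
        job_hours -= units
    if job_hours > 0:
        raise ValueError("not enough capacity in the window to schedule all work")
    return plan

# Hypothetical forecast: low load overnight, peak in the late afternoon.
forecast = [30, 28, 27, 26, 27, 30, 40, 55, 60, 62, 63, 65,
            66, 67, 70, 75, 80, 78, 70, 60, 50, 42, 36, 32]
plan = schedule_flexible_load(forecast, job_hours=10, capacity_per_hour=4)
print(plan)  # all 10 units land in the overnight hours 2, 3 and 4
```

A real demand-response program would also weigh electricity prices, job deadlines, and signals from the grid operator. The greedy rule here only shows the basic idea of moving flexible work away from peak hours.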

The Geopolitics Behind DeepSeek's Hardware

Politics and Export Restrictions

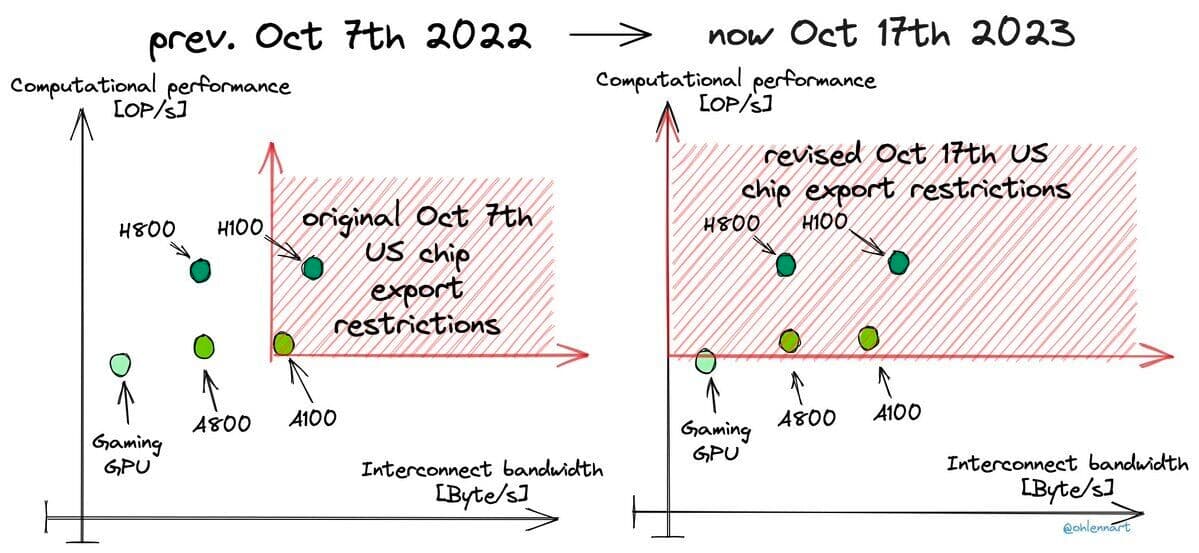

U.S. export restrictions on advanced AI chips, introduced in 2022 and tightened in subsequent years, aimed to curtail China's ability to develop cutting-edge AI technologies by limiting access to high-performance semiconductors like Nvidia's A100 and H100 GPUs.

These measures initially created significant challenges for Chinese AI developers, restricting their computational resources and increasing the cost and complexity of training large-scale AI models.

However, DeepSeek adapted in two ways. It reportedly stockpiled restricted chips before the bans took effect, and it used software optimization to get more out of less advanced hardware, allowing it to work around the restrictions.

While the U.S. restrictions slowed China's access to state-of-the-art chips, they failed to fully suppress its progress in AI development, as Chinese firms showed resilience and ingenuity in overcoming these barriers. Because China and other restricted importers could not obtain the most powerful data center GPUs, they had a strong incentive to squeeze more out of the hardware they had. That pressure ultimately drove this push for efficiency.

Hardware

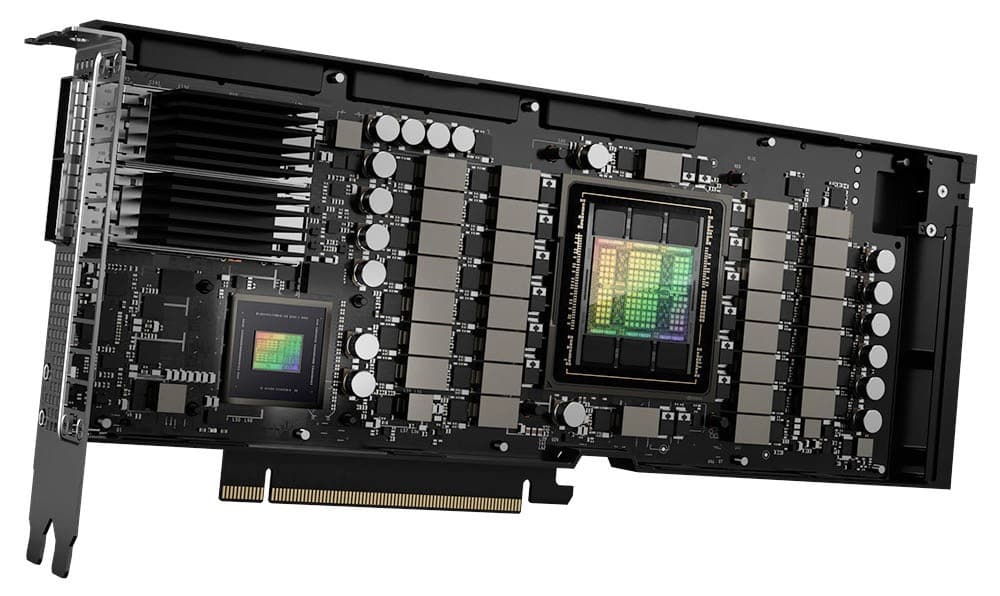

DeepSeek trained its V3 model on a relatively modest hardware setup by industry standards: a cluster of 2,048 Nvidia H800 GPUs. The H800 is a cut-down, export-compliant version of Nvidia's H100. It has reduced FP64 performance and, most notably, reduced chip-to-chip (NVLink) interconnect bandwidth.

Although the exact number isn't known, it is likely GPT-4 was trained using over 10,000 Nvidia H100 or Nvidia A100 GPUs.
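
The difference in cluster size matters mostly for wall-clock time. The sketch below assumes the roughly 2.788 million H800 GPU-hours reported in DeepSeek's V3 technical report. Purely for illustration, it also assumes perfect linear scaling, although real clusters lose some efficiency to communication. It compares DeepSeek's 2,048-GPU cluster with a hypothetical 10,000-GPU cluster running the same GPU-hour budget. It does not model GPT-4's actual training run, which has not been disclosed. The helper `wall_clock_days` is illustrative.

```python
HOURS_PER_DAY = 24

def wall_clock_days(total_gpu_hours: float, num_gpus: int) -> float:
    """Days needed to spend a GPU-hour budget, assuming perfect linear scaling."""
    return total_gpu_hours / num_gpus / HOURS_PER_DAY

# Assumed input: reported H800 GPU-hours for the DeepSeek-V3 training run.
DEEPSEEK_V3_GPU_HOURS = 2_788_000

# DeepSeek's cluster vs. a hypothetical 10,000-GPU cluster with the same budget.
for cluster_size in (2_048, 10_000):
    days = wall_clock_days(DEEPSEEK_V3_GPU_HOURS, cluster_size)
    print(f"{cluster_size:>6} GPUs: {days:.1f} days")

print(f"Cluster size ratio: {10_000 / 2_048:.2f}x")
```

Cluster size alone says little about total compute. What matters is the total GPU-hours and the throughput of each GPU, which is why the GPU-hour figure is the more useful number when comparing training runs.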

Implications

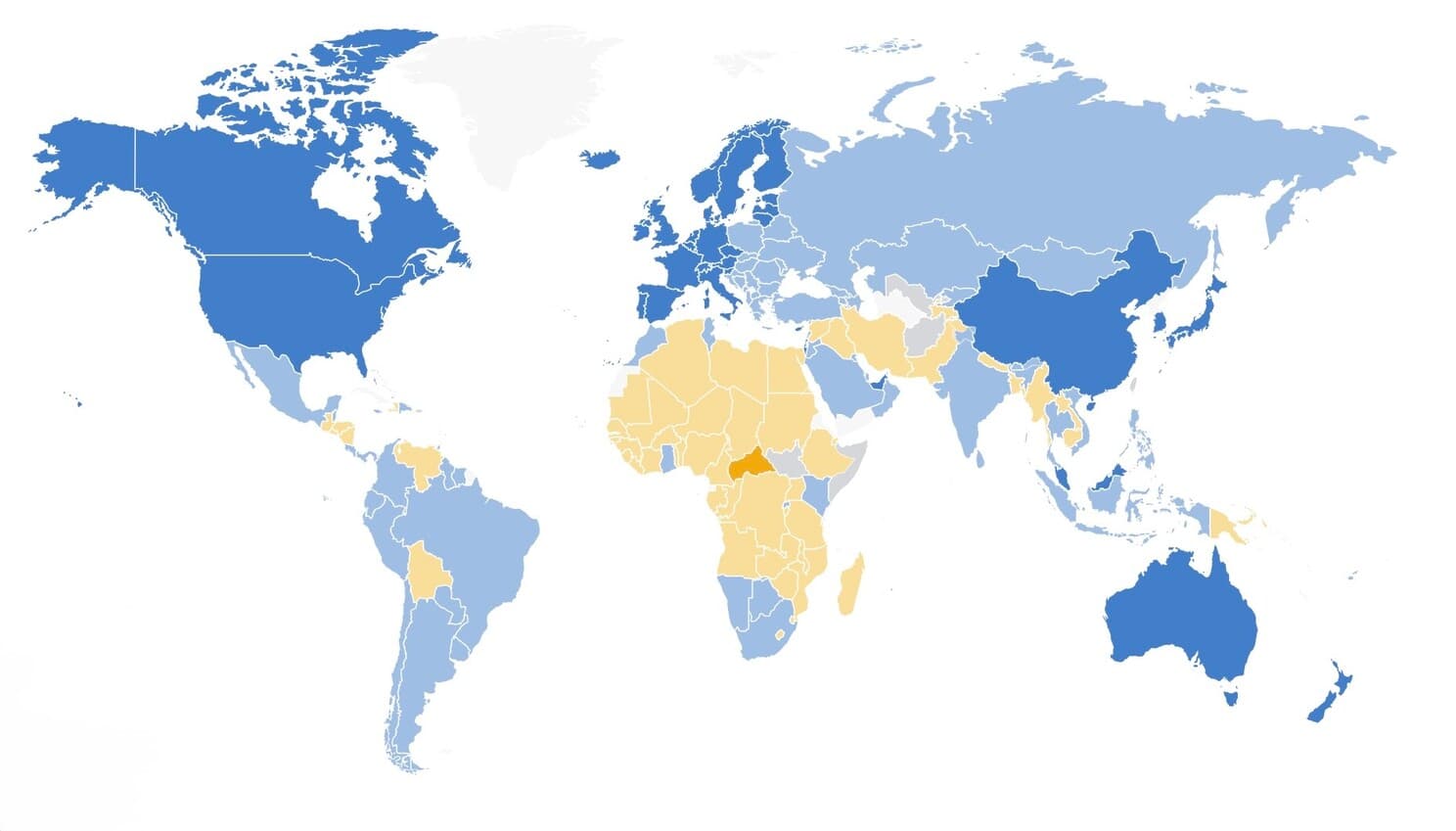

It's plausible that DeepSeek's emergence, alongside GPT-4 and other leading AI models, could contribute to a more balanced marketplace with multiple suppliers, each catering to different priorities (e.g., raw computing power vs. energy efficiency). Large organizations and well-resourced nations may still rely on top-tier GPUs and computational horsepower. Those with constrained budgets or limited energy infrastructure, however, might favor DeepSeek's leaner approach, effectively creating a bifurcated global AI ecosystem. In this environment, so-called "energy-poor" nations, or those more sensitive to sustainability concerns, could gravitate toward DeepSeek's cost-effective, lower-energy designs. That shift could level the playing field in AI development and allow smaller states to compete in ways they couldn't before.

At the same time, this shift could compound geopolitical tensions. As more countries succeed with DeepSeek-like solutions, the traditional high-end GPU supply chain—dominated by the United States and its allies—would lose some leverage. Export restrictions and trade policies around cutting-edge chips might become less impactful if a significant slice of the market no longer needs that level of hardware. This dynamic, in turn, could spur new alliances around energy-efficient AI development or prompt renewed efforts to maintain hardware supremacy. Governments and tech giants might race to improve or acquire the IP necessary for next-generation, low-energy AI to ensure a strategic edge.

Regarding President Trump's muted response to DeepSeek's emergence, there are a few ways to interpret it. Some might see it as a pragmatic acknowledgment that efforts to curb China's AI capabilities haven't fully stalled Chinese innovators, especially now that AI can thrive on less powerful hardware. Others might view it as a more conciliatory stance, signaling an interest in reducing trade friction if China can make progress despite U.S. export restrictions. Either way, it suggests an evolving climate where outright confrontation may give way to more nuanced, strategic maneuvering, especially if U.S. stakeholders recognize that AI is increasingly defined by algorithmic efficiency rather than brute-force chip power.

Ultimately, these developments point to a future where AI extends beyond business applications and becomes a critical factor in international relations, resource allocation, and strategic alliances. As AI models become more accessible to lower-income nations and private-sector innovators alike, the technology's influence on global power structures and diplomatic dialogue may only deepen.

Summary

DeepSeek disrupts the AI landscape by reportedly offering performance on par with GPT-4 while using up to 90% less energy and producing 92% fewer emissions, at a fraction of GPT-4's estimated training cost. By focusing on algorithmic efficiency and optimized hardware usage, DeepSeek shows that powerful AI need not rely on massive computational resources alone. Its emergence could reshape AI adoption worldwide, making advanced models accessible in regions with constrained energy or limited budgets. DeepSeek's success with less advanced hardware, combined with strategic chip stockpiling, also demonstrates resilience against U.S. export restrictions and shows how innovation can work around geopolitical hurdles. Looking ahead, DeepSeek's approach may broaden the AI market by balancing sustainability, affordability, and high-level performance.

AI disclosure

This article was researched and written with the help of Perplexity AI, one of my favorite AI apps.